Why John Steinbach’s $281 Electric Bill Is Fueling China’s AI

From Manassas to Guizhou: The Geography of Compute

Three things happened in three places at the same moment.

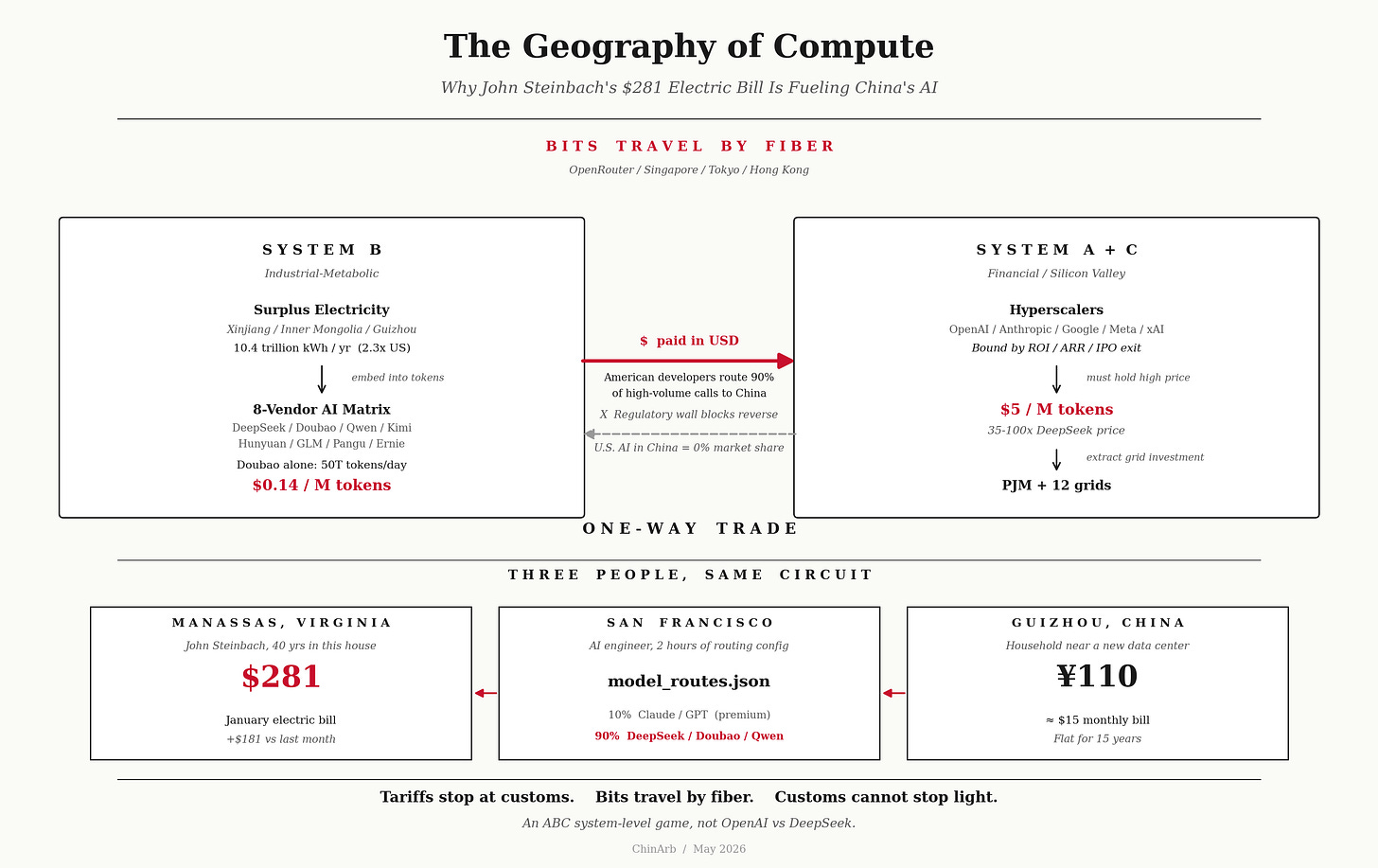

San Francisco, 3 PM. An AI engineer is writing model_routes.json. The most expensive client conversations — emotional support, medical explanation, legal analysis — go to Claude Opus 4.7 at $5/$25 per million tokens. Document summaries route to GPT-5.5 at $5/$30. Everything else flows to the Chinese matrix: code completion to DeepSeek V4 Flash at $0.14/$0.28; long documents to Kimi K2.5; product copy, SEO, email drafts to Doubao 1.5 Lite at ¥0.3 per million — cheaper than DeepSeek; tier-one customer service on MiniMax, nearly free; private deployment fallback on open-source Qwen at zero cost. The configuration takes him two hours. He doesn’t think of it as a geopolitical event — he’s optimizing.
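The routing the engineer writes can be pictured as a small lookup table. The sketch below is a hypothetical Python rendering, not the actual contents of model_routes.json: the workload labels, the model keys, and the `route` and `cost_usd` helpers are assumed names for illustration, and treating Qwen as the default for unlisted workloads is also an assumption. Only the three dollar prices quoted above are included.

```python
# Hypothetical sketch of the routing described above. Workload labels,
# model keys, and helper names are illustrative assumptions, not a real schema.

ROUTES = {
    # High-stakes conversations stay on the most expensive model.
    "emotional_support": "claude-opus-4.7",
    "medical_explanation": "claude-opus-4.7",
    "legal_analysis": "claude-opus-4.7",
    "document_summary": "gpt-5.5",
    # High-volume, low-risk work goes to the cheaper Chinese models.
    "code_completion": "deepseek-v4-flash",
    "long_document": "kimi-k2.5",
    "product_copy": "doubao-1.5-lite",
    "seo": "doubao-1.5-lite",
    "email_draft": "doubao-1.5-lite",
    "tier1_customer_service": "minimax",
}

# Assumption: unlisted workloads fall back to self-hosted open-weight Qwen.
FALLBACK = "qwen-open-weights"

# USD per million tokens as (input, output), as quoted in the article.
PRICES_USD = {
    "claude-opus-4.7": (5.00, 25.00),
    "gpt-5.5": (5.00, 30.00),
    "deepseek-v4-flash": (0.14, 0.28),
}


def route(workload: str) -> str:
    """Return the model key for a workload, or the fallback if unlisted."""
    return ROUTES.get(workload, FALLBACK)


def cost_usd(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimate the dollar cost of one call for models with a quoted USD price."""
    price_in, price_out = PRICES_USD[model]
    return (input_tokens * price_in + output_tokens * price_out) / 1_000_000
```

Under these assumptions, a call that reads and writes one million tokens each costs $30 on Claude Opus 4.7 and about $0.42 on DeepSeek V4 Flash, which is the whole logic of the file in one comparison.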

Manassas, Virginia. Pre-dawn. John Steinbach opens his January electric bill. $281. He’s lived in this house 40 years and never seen a number like this. He doesn’t know how much higher next month will go.

Qianjiang prefecture, Guizhou, China. Same day. An ordinary household’s meter reads 200 kWh for the month. Bill: ¥110, about $15. The number hasn’t moved much in 15 years.

Not Just DeepSeek — The Whole Matrix Is Pressing

What the San Francisco engineer just did is what Apple did with Foxconn in 2005.

High-margin work stays in America. The high-volume, thin-margin work moves to China. What’s moved isn’t an assembly line — it’s token inference. The Chinese factory isn’t in Guangdong; it’s in Xinjiang, Inner Mongolia, Guizhou — wherever the electricity is cheap.

And Chinese AI isn’t just DeepSeek.

Doubao (ByteDance) processes over 50 trillion tokens daily and holds 46.4% of China’s public-cloud LLM API market. Tongyi Qianwen (Alibaba) holds 27% and is the most-downloaded open-weight model family on HuggingFace globally. Ernie (Baidu) holds 17%. DeepSeek owns the open-source coding crown. Kimi reigns over long-context. Hunyuan does video. GLM does agents. Huawei’s Pangu is 718B parameters, trained entirely on Ascend chips. Add LongCat, Spark, MiMo, Step: eight tier-one players, dozens of tier-two, all running, all selling.

America: OpenAI, Anthropic, Google, Meta, xAI. Five.

China can support this many because electricity is essentially free (relative to the U.S.). America can only support five because each one fights for grid capacity, capital returns, and Wall Street’s ROI patience.

Doubao’s daily 50 trillion tokens — more than OpenAI, Anthropic, and Google AI Studio combined.

System B’s Training Ground: Forge Inside, Then Release

China’s domestic AI market has long since been competed down to zero margin. Doubao at ¥0.3. GLM free. DeepSeek subsidized through cloud platforms. Qwen open-sourced at $0. Eight tier-one players knife-fighting until everything’s beneath the floor.

Closed market plus zero internal margin pushes Chinese vendors offshore.

This is System B’s training-ground logic — use a market that’s closed externally and brutal internally to forge vendors hard, then release them. Cars walked this path (BYD, Chery, Geely now sweeping Southeast Asia and Europe). Solar walked it (the survivors took 80% of global capacity). Batteries, appliances, phones — same script.

AI is the latest installment.

The largest AI-paying customer offshore?

The United States.

American developers pay in dollars, in full, monthly. Cursor, Replit, AI startups across San Francisco — all calling Chinese models daily through OpenRouter, Together AI, Fireworks.

The reverse?

ChatGPT is blocked in China. Claude API is inaccessible. So is Gemini. OpenAI, Anthropic, Google — combined market share in mainland China: zero.

Chinese AI flows to American customers, settled in dollars, through fiber, with no customs.

American AI flows to China — blocked at the regulatory wall, not a single dollar collected.

In the manufacturing era, at least American iPhones could still sell in China. In the AI era, U.S. AI market share in China is 0%.

This isn’t a 1:1 copy of Foxconn. It’s Foxconn plus one-way market closure. More asymmetric than manufacturing ever was.

The Cut for Ordinary People: The Electric Bill Behind the AI Boom

The AI Boom is the central narrative of this U.S. equity bull market. Nasdaq highs, Magnificent 7 valuations, hyperscaler capex — all built on “AI changes everything.”

Electricity does not come out of PowerPoint.

Data centers consume nearly half of new U.S. electricity capacity. Residential power prices rose 33% from 2019 to 2025. Single-year increase in 2025 hit 10.5% — among the fastest in a decade (NEADA).

EIA’s April 2026 federal report: U.S. utilities disconnected residential electricity 13.4 million times in 2024 and sent 94.9 million final shutoff notices. Against 143 million residential customers nationwide, that is about two notices for every three customers, though a single household can receive several. 3.5 million households lost power, projected at 4 million for 2025. The 21.5 million low-income households now spend 8.6% of income on energy, nearly triple the rate for other households.

In the same year utilities posted record profits of $54 billion. American Electric Power’s CEO took home $36.6 million.

Consumer Reports put Manassas’s John Steinbach and his $281 bill on its March 2026 cover. Same survey: 78% of Americans worry data centers are pushing up their electric bills. Virginia survey: three-quarters of voters blame data centers for rising costs.

Harvard Environmental Law Review put it plainly: residents are subsidizing AI and data centers.

Whichever state hosts the data center sees the steepest power-bill increases. Regional grids (PJM covers 13 states) see wholesale procurement costs surge — most of which gets passed to residents through regulator-approved rate hikes.

That’s the inside squeeze.

The outside squeeze arrives at the same time. DeepSeek V4 Flash at $0.14 against GPT-5.5 at $5 per million input tokens is a roughly 35x gap; on output tokens, $0.28 against $30, it is roughly 100x. Hyperscalers can’t match the price (it would destroy ROI) and can’t ignore it (they’d lose share). Capital markets don’t allow ROI collapse, so prices stay high, and high prices require extracting more grid investment from residents. The loop closes.
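The 35x to 100x range can be checked directly from the per-million-token prices the article quotes for DeepSeek V4 Flash, GPT-5.5, and Claude Opus 4.7. The snippet below is a minimal sketch; the constant names and the `price_gap` helper are illustrative, not taken from any vendor's API.

```python
# Per-million-token prices in USD as (input, output), as quoted in the article.
DEEPSEEK_V4_FLASH = (0.14, 0.28)
GPT_5_5 = (5.00, 30.00)
CLAUDE_OPUS_4_7 = (5.00, 25.00)


def price_gap(expensive, cheap):
    """Return (input_ratio, output_ratio) of the expensive model over the cheap one."""
    return (expensive[0] / cheap[0], expensive[1] / cheap[1])


for name, prices in (("GPT-5.5", GPT_5_5), ("Claude Opus 4.7", CLAUDE_OPUS_4_7)):
    gap_in, gap_out = price_gap(prices, DEEPSEEK_V4_FLASH)
    print(f"{name}: {gap_in:.1f}x on input, {gap_out:.1f}x on output")
```

On these figures the input gap is about 36x for both U.S. models, and the output gap runs from roughly 89x (Claude Opus 4.7) to roughly 107x (GPT-5.5), so the low end of the range is an input-token comparison and the high end an output-token one.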

Every San Francisco developer who replaces Claude with DeepSeek nudges the Manassas family’s bill up another notch.

Meanwhile that Guizhou family’s electricity bill hasn’t moved much in 15 years. China’s residential rate moved from ¥0.51/kWh in 2010 to ¥0.55 in 2025 — increase below inflation. China’s 2025 total electricity consumption broke 10 trillion kWh — 2.3 times America’s 4.5 trillion.
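A quick arithmetic check ties these figures to the Guizhou household's bill. The sketch below uses only numbers quoted in the article; the variable names are illustrative, and "broke 10 trillion kWh" is treated as a floor, which is an assumption about how to read that phrase.

```python
# Figures quoted in the article.
BILL_YUAN, USAGE_KWH = 110.0, 200.0      # Guizhou household, one month
RATE_2010, RATE_2025 = 0.51, 0.55        # yuan per kWh, residential
YEARS = 15
CHINA_TWH_FLOOR, US_TWH = 10_000.0, 4_500.0  # 1 trillion kWh = 1,000 TWh

# Rate implied by the household bill.
implied_rate = BILL_YUAN / USAGE_KWH

# Cumulative and annualized change in the residential rate.
total_increase = RATE_2025 / RATE_2010 - 1
annualized = (RATE_2025 / RATE_2010) ** (1 / YEARS) - 1

# Lower bound on the China-to-U.S. consumption ratio.
consumption_ratio = CHINA_TWH_FLOOR / US_TWH

print(f"implied rate: {implied_rate:.2f} yuan/kWh")
print(f"rate change 2010-2025: {total_increase:.1%} total, {annualized:.2%} per year")
print(f"consumption ratio: at least {consumption_ratio:.2f}x")
```

The household bill implies ¥0.55/kWh, matching the 2025 rate. The rate rose about 7.8% in total, or about 0.5% a year. The consumption ratio is at least about 2.2x; the 2.3x in the text would correspond to Chinese consumption near 10.35 trillion kWh.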

Industrial users subsidize residential users — China’s structural arrangement, opposite of America’s.

That family’s town saw a data center break ground three years ago. They didn’t quite understand why it was built so far from Shanghai or Beijing.

Later they realized: this town is close to two things — cheap electricity and surplus electricity.

Guizhou, Inner Mongolia, Xinjiang, Ningxia — surplus wind, surplus solar, surplus hydro from the western corridor — these electrons used to have nowhere to go, because electricity can’t be shipped on a boat.

Now they have somewhere to go: into tokens.

That family doesn’t know any of this. They just know their bill hasn’t risen, the air conditioner can stay on, their college-aged son doesn’t grumble about utilities. They are riding the export of their own electricity. They don’t know they are.

And John Steinbach in Manassas doesn’t know either: a portion of his extra $181 has flowed into the same global energy-metabolism circuit that keeps Guizhou’s bill flat.

Two ordinary people. Same planet. Two opposite energy regimes.

One is collecting. One is paying.

The Cut for the Tech Crowd: System C’s Real Position

Silicon Valley’s self-narrative: we’re forking from Wall Street and the Pentagon, building our own grid, our own compute, our own protocol communities.

Half right. Half fatally wrong.

Right half: System C is detaching from System A. Stargate, xAI Colossus, Anthropic’s private grid plans — these are physical decoupling. On frontier research, reasoning frontiers, and agentic capability, System C still leads. GPT-5, Claude Opus 4.7, Gemini 3 Pro hold SOTA — and won’t lose it short term.

Wrong half: System C is still embedded in System A’s capital-return constraint.

OpenAI valued at $150B. Anthropic at $61B. That money is Wall Street’s, sovereign-fund money, ARR-model money. Every dollar assumes IPO or strategic-buyer exit.

System B’s AI vendors are different. Doubao is ByteDance’s internal asset. DeepSeek is a research project under hedge fund High-Flyer. Qwen is Alibaba’s strategic gateway. None needs an IPO. None needs ARR. None needs to answer to shareholders.

C raises A’s money but competes against B’s cost function.

Match the price — valuation drops. Don’t match it — market share drops. System C thinks it’s the most autonomous of A/B/C. It’s actually the middle layer being squeezed by A’s capital market and B’s physical base simultaneously.

I have argued in The Great Fork — America is splitting internally, A vs C, B watching. What that piece didn’t say: B isn’t just watching. B is the dual beneficiary. B is harvesting both C’s developer market (C’s customers using B’s APIs) and A’s residential market (A’s residents paying electricity bills to feed C’s domestic compute, which can’t beat B’s prices).

System C’s Silicon Spacecraft wants to launch alone. The fuel for that launch — energy, minerals, low-cost compute — sits in B’s hands.

The Cut for Investors: A Valuation-Paradigm Switch

This is no longer news. Bloomberg has named China’s AI sector “the Pinduoduo of AI.” Wall Street analysts publicly question the ROI sustainability of U.S. AI capex. Marc Andreessen called it “AI’s Sputnik moment.”

But the market hasn’t fully priced it in.

DeepSeek V4 Pro costs 1/7 of Claude Opus 4.7 and 1/6 of GPT-5.5. The Flash variant goes harder — 35 to 100 times cheaper than top U.S. models.

And the performance gap doesn’t form a defensive line: DeepSeek V3’s HumanEval score of 82.6% already surpasses GPT-4o and Claude 3.5. In code completion, document summary, tier-one customer service, product copy, SEO, email drafts, and ticket triage (the high-frequency, low-risk workloads that account for 80%+ of enterprise token consumption), the Chinese matrix has reached substitutability. Hyperscalers still hold frontier reasoning, medical/legal decision-making, and long-chain agentic workflows, but those segments are low in token volume, high in unit price, and slow-growing.

I have argued in Neocloud Death Spiral — GPU depreciation plus high-rate leverage plus grid lag, three pressures hitting hyperscalers simultaneously. Now add a fourth: Chinese low-cost tokens have nailed the global token-price ceiling beneath their marginal cost.

Capital markets need to do one thing — switch from the “AI SaaS premium model” to the “compute commoditization model.”

This is not an emotional pullback. It’s a valuation-paradigm recalibration — from “perpetually growing SaaS” to “compute compressed toward marginal cost.” SaaS multiples sit at 15-30x ARR. Compute commoditization multiples sit at 3-8x.
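To see what the switch between multiples means in dollar terms, the sketch below applies both ranges to the same revenue. The $10 billion ARR figure is purely hypothetical and does not describe any company; the function names are illustrative.

```python
# Multiple ranges quoted in the article, as (low, high) times ARR.
SAAS_MULTIPLE = (15, 30)
COMMODITY_MULTIPLE = (3, 8)


def valuation_range(arr_billion, multiple_range):
    """Return the (low, high) valuation in $ billions for a given ARR."""
    low, high = multiple_range
    return (arr_billion * low, arr_billion * high)


def decline_range():
    """Return the (mildest, harshest) fractional drop at unchanged ARR."""
    mildest = 1 - COMMODITY_MULTIPLE[1] / SAAS_MULTIPLE[0]   # 15x down to 8x
    harshest = 1 - COMMODITY_MULTIPLE[0] / SAAS_MULTIPLE[1]  # 30x down to 3x
    return (mildest, harshest)


HYPOTHETICAL_ARR = 10  # $ billions, an assumed figure for illustration only
print("SaaS model:", valuation_range(HYPOTHETICAL_ARR, SAAS_MULTIPLE))
print("Commodity model:", valuation_range(HYPOTHETICAL_ARR, COMMODITY_MULTIPLE))
print("Decline at unchanged ARR: {:.0%} to {:.0%}".format(*decline_range()))
```

With revenue held constant, the change of model alone moves a $150 to $300 billion valuation to $30 to $80 billion, a decline of roughly 47% in the mildest case and 90% in the harshest.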

Not a valuation crash. A change in valuation models.

And model changes are never smooth — they happen on a single earnings day, when everyone simultaneously realizes the growth curve has been cut from below by the Chinese matrix, and multiples reprice the next morning.

The Cut for the Policy Crowd: The Trade War Has Already Failed

Trump has imposed four rounds of tariffs in three years. China’s trade surplus with the U.S. has grown from $400 billion to $500 billion.

Tariff weapons were built for the atoms era. They require borders, form, country-of-origin documentation, HS codes, customs declarations.

Tokens have none of these.

What’s actually breaking System A’s arsenal isn’t the token itself — it’s path complexity exceeding regulatory capacity:

Layer 1 — API call layer. A San Francisco developer reaches DeepSeek through OpenRouter, Together AI, or Fireworks. The request bounces through Singapore, Tokyo, Hong Kong edge nodes. Customs has nothing to seize.

Layer 2 — Weight distribution layer. Even if APIs are banned, Qwen, DeepSeek, GLM open weights see hundreds of thousands of legal downloads daily on HuggingFace. Can’t be sealed.

Layer 3 — Inference location layer. Even if weights are sealed, enterprises can rent GPU compute in Southeast Asia, Europe, the Middle East — run Chinese weights, send the output back to America.

Regulators must seal all three layers. Developers only have to bypass one.
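The asymmetry in that last line is a matter of simple logic: blocking requires every layer to be sealed, while access requires only one to stay open. The sketch below enumerates the possibilities, treating the three layers as independent switches, which is a simplifying assumption; the layer labels and function names are illustrative.

```python
from itertools import product

# The three layers named above, as illustrative labels.
LAYERS = ("api_calls", "open_weight_downloads", "offshore_inference")


def access_blocked(sealed):
    """Access is blocked only if every layer is sealed."""
    return all(sealed[layer] for layer in LAYERS)


def count_outcomes():
    """Count (blocked, still_open) across every sealed/unsealed combination."""
    blocked = 0
    still_open = 0
    for states in product((False, True), repeat=len(LAYERS)):
        sealed = dict(zip(LAYERS, states))
        if access_blocked(sealed):
            blocked += 1
        else:
            still_open += 1
    return blocked, still_open


print(count_outcomes())
```

Of the eight combinations, only the one with all three layers sealed blocks access; the other seven leave at least one path open.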

The Trump administration’s official position — Energy Secretary Chris Wright in February: “Americans are not paying higher prices because of data centers.” Same period, Consumer Reports survey: 78% disagree. Pew survey: American concern about AI now exceeds enthusiasm. Maine just passed a moratorium on new data center construction. Two Republican commissioners on Georgia’s utility-regulatory commission were voted out in November.

The government denies. Voters aren’t buying.

China’s tariff weapon — the regulatory wall — works. It blocks one thing precisely: U.S. AI from entering the Chinese market.

System A’s tariffs only fire one way.

China’s electricity-export form has upgraded: from atoms (steel, batteries, solar panels) to bits (tokens, inference services). Bits cannot be tariffed.

The real problem for the policy crowd isn’t tariff failure — it’s that System A has no weapon designed for the bits battlefield. SWIFT can’t sanction token calls. Export controls can’t stop open-weight downloads. Chip restrictions: DeepSeek bypassed them with H800s plus algorithmic optimization.

System A is fighting this generation’s war with last generation’s weapons.

In One Dimension: An ABC System-Level Game, Not OpenAI vs DeepSeek

The U.S.-China AI competition looks like OpenAI vs DeepSeek.

It’s actually three systems with different physical bases and market structures, taking specific form on the AI track.

System A: open market, closed grid, capital demands ROI, AI services blocked from China by the regulatory wall. Result: high prices, share erosion, electricity costs passed to residents, valuations suspended.

System B: closed market, open grid, state-affiliated capital that doesn’t require ROI, AI services slipping through OpenRouter past every tariff. Result: low prices, expanding share, electricity exported as tokens, residential bills stable.

System C: thinks it’s detaching from A — actually trapped by A’s capital-return constraint and B’s physical base. Frontier still leads. Cost function already lost.

This isn’t a chip fight. Not a model fight. Not an algorithm fight.

It’s three systems’ physical metabolic styles colliding on the same table.

Whoever can run more tokens — using cheaper electricity, larger grid capacity, longer capital patience, more closed training markets — gets pricing power for the next round.

Ordinary Americans are paying electric bills for a structurally asymmetric race.

Wall Street is pricing on an outdated valuation model.

Silicon Valley is racing with hands tied by ROI against opponents who don’t need ROI.

Trump tariffs atoms. Beijing exports bits.

This isn’t winning or losing. It’s two physical metabolic styles shaping each other on the same track.

Tariffs stop at customs. Bits travel by fiber.

Not Closing

The San Francisco engineer drives home. On the radio, Trump threatens more tariffs. He smiles a little — he knows it doesn’t apply to him. What he buys isn’t a commodity. It’s a token.

In Manassas, John Steinbach calculates whether the air conditioner can stay off a little longer.

The Guizhou family turns on the TV, sips hot water, and watches the news scroll: “China’s AI Leads the World.”

Their electric bill won’t rise.

Because their electricity is being exported in a purer form.

Customs cannot stop light.

The other day I asked Qwen if they provide an offline version, like Claude.

The answer was yes. I asked how much I would have to pay to use it ...

Zero was the answer. Just felt ripped off for my $20 a month for Claude... After coming from a slow and annoying ChatGPT ...

I'm starting to move where the democratisation of AI is: in China 😏

Why pay US billionaires to use their tools when I can get the same for free 🤷‍♂️ from a country where I lived 4 years, and witnessed as really comfortable for most, particularly the youths.

Claude? ChatGPT?

Better I install & train straight into Qwen, Zhipu, Moonshot, DeepSeek or any of the other Chinese ones 🤗

Why get a Tesla when a BYD offers better for much less headache?

AI competition is shifting from a contest over model capability into a broader competition over energy, capital, market access, and institutional organization.

China’s low-cost models are effectively putting a ceiling on global token pricing. U.S. AI companies may not collapse immediately, but the valuation framework could shift from a 15–30x ARR SaaS multiple to a 3–8x commoditized compute multiple. This is the most striking judgment I took from @chinarb’s piece.

One correction I would add: it is true that American developers are increasingly using Chinese models while U.S. models have almost no access to the Chinese market. But the reason is not simply that U.S. models are being kept out by China’s regulatory firewall. It is also that Anthropic, Google, and OpenAI have chosen to restrict or close services to users in mainland China. That is deeply unfortunate.

China’s advantage is not merely that its models are cheaper. Its advantage lies in its ability to organize low-cost energy, cloud infrastructure, open-source ecosystems, application markets, and patient capital into a low-cost inference system.

The United States has an open market, but also a structure shaped by capital return requirements, high electricity prices, elevated valuations, and the pass-through of infrastructure costs to households. China has a more closed domestic market, brutal internal competition, lower electricity costs, longer capital patience, and a stronger willingness to export low-priced AI services outward. Silicon Valley sits in between: it still leads at the frontier of model capability, but it is increasingly constrained by U.S. capital markets and the physical cost structure of the American power system.

This is exactly where my argument in “The Great Partition of Global AI” becomes more relevant. The global AI system is not splitting into two fully isolated blocs, but it is being partitioned layer by layer. The top layer of frontier models, high-end chips, national-security applications, and sensitive data will become increasingly securitized. The middle layer of open-source models, enterprise tools, application frameworks, and developer workflows will remain partially connected. The bottom layer of inference, deployment, cloud routing, hardware adaptation, and cost optimization will become the real battlefield. John Steinbach’s $281 electricity bill is not just a local utility story. It is a symptom of how AI partition is moving from chips and models into power grids, capital costs, cloud pricing, and household bills.

This also explains why China’s AI position cannot be understood only through the lens of model benchmarks. China may still lag the United States at the very frontier of closed proprietary models, but it is building a different kind of advantage in the deployment layer: cheaper power, brutal domestic competition, open-weight model diffusion, cloud ecosystem bundling, hardware adaptation around Ascend and domestic chips, and a massive internal market where models are forced to become cheaper and more usable. In my framework, this is the lower and middle layer of the global AI partition. The U.S. may still dominate the frontier layer, but China is trying to shape the cost structure and deployment logic of the global inference layer. That is why the next phase of AI competition will not be decided only by who has the best model, but by who can turn intelligence into a low-cost, high-volume, globally routable utility.